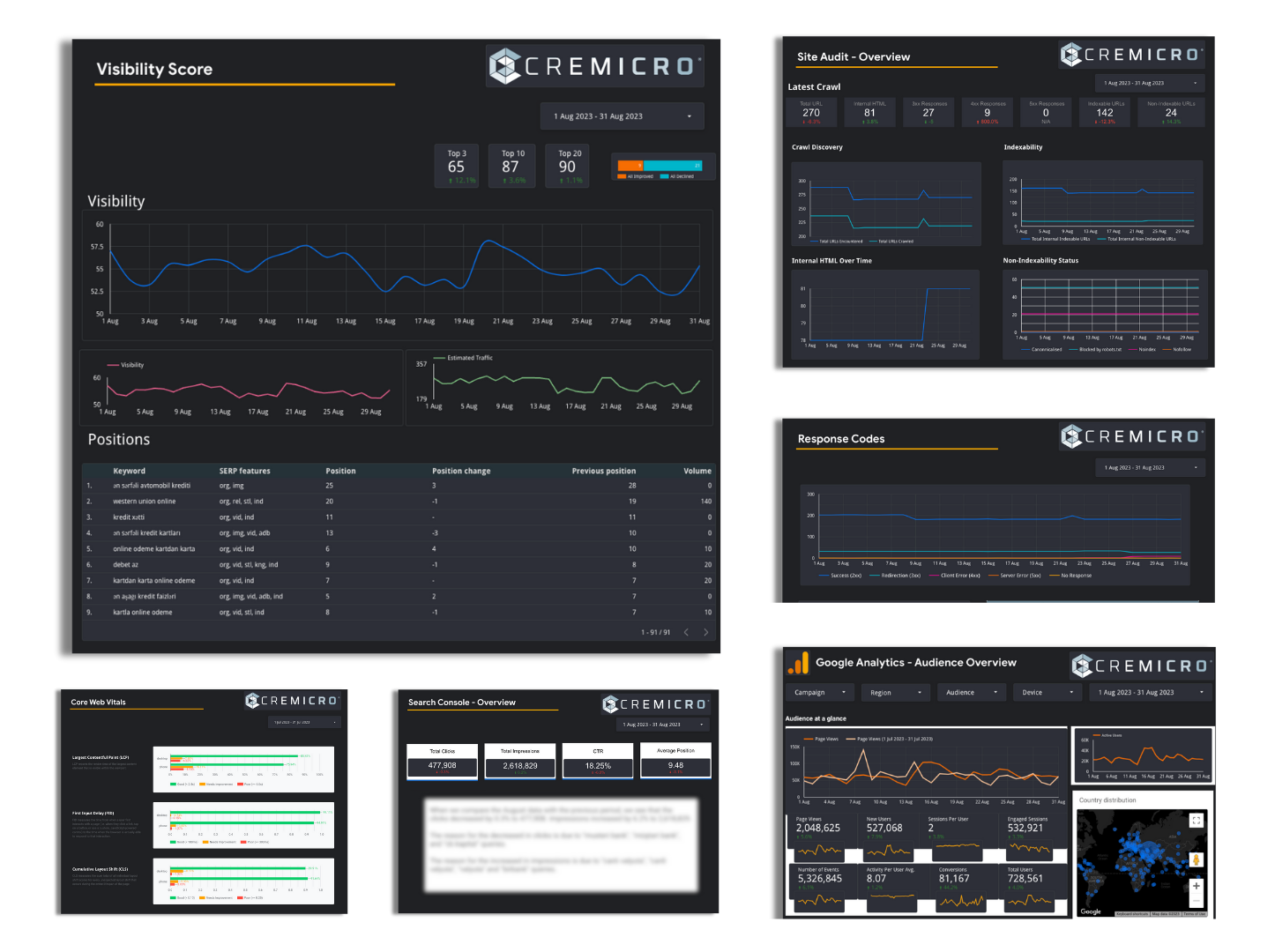

Do you want to reach your target audience and grow your business? Our digital marketing strategies will help your business stand out from the competition and generate high-quality leads.

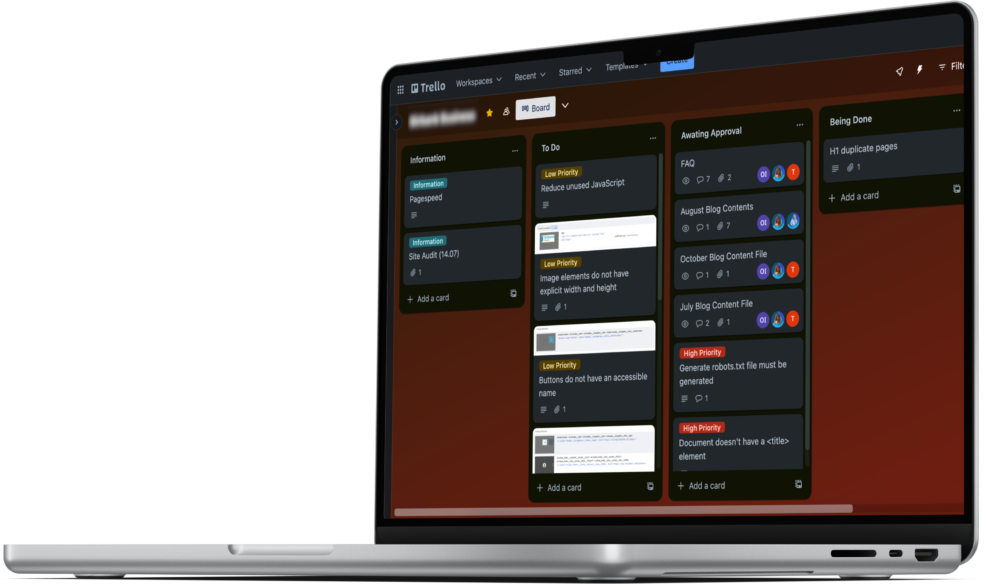

We are experts at turning your target audience into customers. We determine and implement a strategy suited to your brand, build a plan tailored to you, and carry it through to production.

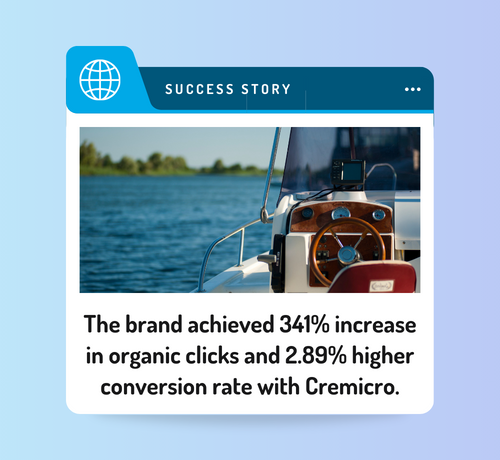

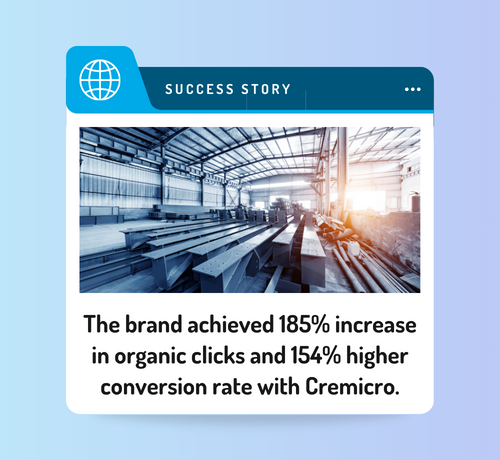

With Cremicro, you climb step by step from where you are today to the top, whatever your industry or business size. With a strong digital presence, your business will always be easy for customers to find, and your market reach will keep growing!